A Speculative Exploration of Generative AI, Artificial Intimacy, Artificial Unintelligence, and the Uncanny.

Siobhan O’Flynn © 2024. Project website: E-Mote AI

Research Question:

E-Mote AI asks: What might be the effects (social, political, cultural) of the uncritical and unregulated adoption of generative AI Chatbots, assistants, and companions?

How might creating a fictional start-up company in this sector provoke questions, discussions, and critical engagement with the many concerns and benefits that can be identified?

E-Mote AI: An Exploration of Generative AI, Artificial Intimacy, Artificial Unintelligence, and the Uncanny is a transmedia project currently in beta that uses the methodologies of speculative futures and critical design to explore the emergent logics, industry practices, and implications of the rush to bring AI mental wellness apps, assistants, and companions to market. This project situates today’s chatbots in a continuum of automata, such as the 18th-century Mechanical Turk (Ashford, 2017), and of responses to automata and AI, via Joseph Weizenbaum’s caution on the “powerful delusional thinking in quite normal people” he observed in responses to Doctor, the Eliza Chatbot (Weizenbaum, 1976, p. 7). The website for E-Mote AI is designed to simulate a mental wellness start-up launching customizable and personalized AI ChatBots for education, industry & HR. The text uses the marketing copy style churned out by ChatGPT to replicate the aspirational claims common in this sector, here generated by me through multiple revisions and curations of my prompts. The AI-generated text cascades in euphorically hollow metaphors and stylistic flourishes, absent any evidence from peer-reviewed medical studies or vetted journals. The project is designed for a general audience rather than specialists in fields such as STS, critical code studies, digital humanities, or digital media studies.

E-Mote AI targets sectors rapidly shifting to AI as more efficient and less costly than “humane work” and “connective labor” (Pugh, 2022), with two iterations of ESAA, the Employee Sentiment Analysis Assistant and ESAA, the Empathetic Student Anxiety Assistant. The website includes simulated video avatars, generated through cross-posting output between Midjourney and LivingAI when it was available on ChatGPT4. The copy for the videos was scripted by me.

My intention is for users to experience E-Mote AI as a provocation to what is now becoming our status quo, with the race for data for Large Language Models (LLMs) from OpenAI to Siri. The concept and design draw on methodologies from speculative critical design (Bratton, 2016; Dunne and Raby, 2013; Haraway, 2011; Jain, 2019; Candy and Watson, 2013) in order to simulate an encounter with AI Chatbots that invite intimacy and are designed to quell uneasiness, while simultaneously (hopefully) raising uneasiness. Dunne and Raby set out the value of critical design in their work Speculative Everything, in a passage worth quoting in full:

Design as critique can do many things—pose questions, encourage thought, expose assumptions, provoke action, spark debate, raise awareness, offer new perspectives, and inspire. And even to entertain in an intellectual sort of way. But what is excellence in critical design? Is it subtlety, originality of topic, the handling of a question? Or something more functional such as its impact or its power to make people think? Should it even be measured or evaluated? It’s not a science after all and does not claim to be the best or most effective way of raising issues.

Critical design might borrow heavily from art’s methods and approaches but that is it. We expect art to be shocking and extreme. Critical design needs to be closer to the everyday; that’s where its power to disturb lies. A critical design should be demanding, challenging, and if it is going to raise awareness, do so for issues that are not already well known. Safe ideas will not linger in people’s minds or challenge prevailing views but if it is too weird, it will be dismissed as art, and if too normal, it will be effortlessly assimilated. If it is labeled as art it is easier to deal with but if it remains design, it is more disturbing; it suggests that the everyday life as we know it could be different, that things could change.

For us, a key feature is how well it simultaneously sits in this world, the here-and-now, while belonging to another yet-to-exist one. It proposes an alternative that through its lack of fit with this world offers a critique by asking, “why not?” If it sits too comfortably in one or the other it fails. That is why for us, critical designs need to be made physical. (2013, p. 43)

E-Mote AI is meant to “[sit] in this world, the here-and-now,” of today’s online environment and the AI tools and services that obfuscate the data capture propelled by for-profit goals. The irony of the design of E-Mote AI is that the advances in AI and acceleration of adoption now mean that this project is a present reality, though not yet widely understood, as the potential ramifications are unrecognized and often ill-defined.

Rather than aiming for a seamlessly palatable experience, my hope is that the repetitive hyperbole of the grandiose style paired with the occasional glitches can raise user concerns, drawing attention to cracks in the superficiality of understanding of AI Chatbots. Further, I hope that the frustrations experienced in encounters with the bespoke Poe Bot will serve as reminders of the lack of interpretability of the computational processes determining the output, while also providing indicators to gauge the limits and guardrails present in the coding, trackable in what is and isn’t recognized, and the algorithmic biases that may appear. Where the Wachowskis’ 1999 film, The Matrix, visualized this as freezes and glitches in the VR simulation, and the dramatic green cascades of computer code, today’s encounters materialize as friendly assistants on our devices, in our infrastructures, networks, and any system that is functionally reliant on algorithmic processes and decisions.

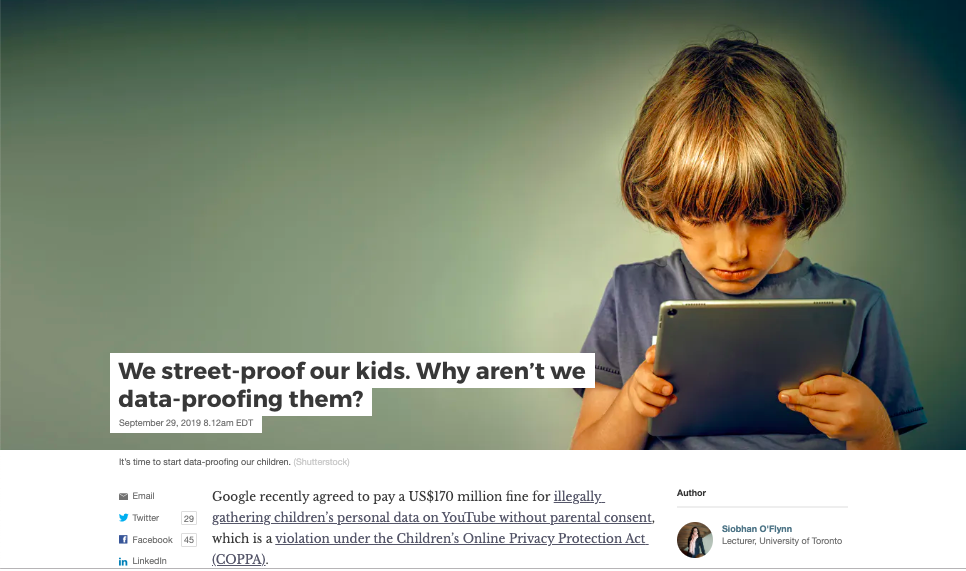

I chose this sector of AI development to highlight the logics of existing AI Chatbots and services (Pi, Replika, Woebot, Poe, KintsugiHealth), and as a provocation to encourage discussion, examination, and optimally, regulation, of what Zuboff has termed “the instrumentalization of data” (2019) now being mined from personal, intimate, and therapeutic realms. The rush to capitalize on this new frontier of data is significantly unregulated, largely operating outside the purview of the FDA in the US and of current and proposed AIDA regulation in Canada. Companies such as Calm and Woebot (apps), and KintsugiHealth and Supermanage (sentiment analysis via HR and Slack respectively), all bypass the much more expensive, time-consuming, and heavily regulated requirements for mental health apps and services.

KintsugiHealth is of particular concern in that its AI analyzes audio biomarkers at increments too fine for human perception to enable early interventions pre-crisis, while the company self-designates as a mental wellness service. As Zuboff has warned, these new industries of data capture and knowledge production are fundamentally political: “The result is that these new knowledge territories become the subject of political conflict. The first conflict is over the distribution of knowledge: ‘Who knows?’ The second is about authority: ‘Who decides who knows?’ The third is about power: ‘Who decides who decides who knows?’” (Naughton, 2019).

The proliferation of intimate data-harvesting technologies such as these should be framed as a rights issue, ensuring the user’s, client’s, or employee’s agency to decide whether or not to opt in to data collection and processing. Further, full transparency and accountability as to data use, third-party sharing, and internal and external data audits must be in place, as we are entering a period of mass-scale social experimentation run by for-profits without ethical oversight.

E-Mote AI is designed to consider the short- and long-term implications of algorithmic therapists and companions, drawing on insights from Dr. Esther Perel, who has warned of the socio-cultural dangers of the “other AI in artificial intimacy” (Perel, 2023). The intention of this project is not just to prompt despair and apathy at a rapidly approaching dystopian near-future; instead, my goal is to emphasize our capacity to choose differently. Dunne and Raby outline the importance of “critical design” as an optimistic intervention towards better futures, writing: “All good critical design offers an alternative to how things are. It is the gap between reality as we know it and the different idea of reality referred to in the critical design proposal that creates the space for discussion. It depends on dialectical opposition between fiction and reality to have an effect. Critical design uses commentary but it is only one layer of many” (2013, p. 35). E-Mote AI embodies the “shiny thing” effect characteristic of generative AI tools and services as the wonder machines of our age. Hopefully, users are not lulled, wooed, and distracted, and instead might pause to consider the implications and questions raised by today’s cyber automata.

Works Cited

Ashford, D. (2017). The Mechanical Turk: Enduring Misapprehensions Concerning Artificial Intelligence. The Cambridge Quarterly, 46(2), 119-139. https://doi.org/10.1093/camqtly/bfx005

Bratton, B. H. (2016). On Speculative Design. Dis Magazine. February. http://dismagazine.com/discussion/81971/on-speculative-design-benjamin-h-bratton/ Accessed 10 August 2019.

Broussard, M. (2018). Artificial Unintelligence: How Computers Misunderstand the World. MIT Press.

Candy, S., and Watson, J. (2013-ongoing). The Situation Lab. https://situationlab.org/ Accessed 2013.

Calm. Accessed 2017. https://www.calm.com/

Dunne, A., and Raby, F. (2013). Speculative Everything: Design, Fiction, and Social Dreaming. MIT Press.

Haraway, D. (2011). Speculative Fabulations for Technoculture’s Generations: Taking Care of Unexpected Country. Australian Humanities Review, no. 50. Accessed 12 June 2013.

Inflection AI, Inc. (2023). Pi.AI https://pi.ai/talk Accessed May 5 2023.

Jain, A. (2019). Calling for a more-than-human politics: A field guide that can help us move towards the practice of a more-than-human politics. Superflux. Accessed 17 September 2021.

Kintsugi Mindful Wellness, Inc. (2022). Kintsugi. https://www.kintsugihealth.com/ Accessed November 12, 2022.

Loveless, N. (2019). How to Make Art at the End of the World: A Manifesto for Research-Creation. Duke University Press.

Naughton, J. (2019). The Goal is to Automate Us: Welcome to the Age of Surveillance Capitalism. The Guardian. Sunday 20 January. https://www.theguardian.com/technology/2019/jan/20/shoshana-zuboff-age-of-surveillance-capitalism-google-facebook Accessed January 20 2019.

Perel, E. (2023). Esther Perel on The Other AI: Artificial Intimacy | SXSW 2023. March 31. https://youtu.be/vSF-Al45hQU?si=WFvJOy7pnzWu0x5a Accessed April 9, 2023.

Poe…COMPLETE

Pugh, A. J. (2022). Constructing What Counts as Human Work: Enigma, Emotion, and Error in Connective Labor. American Behavioral Scientist, 67(14). https://doi.org/10.1177/00027642221127240 Accessed Nov. 18, 2023.

Replika…COMPLETE

Supermanage….COMPLETE

Wachowski, L., & Wachowski, L. (1999). The Matrix. Warner Bros.

Weizenbaum, J. (1967). Contextual understanding by computers. Communications of the ACM, 10(8). http://www.cse.buffalo.edu/~rapaport/572/S02/weizenbaum.eliza.1967.pdf

Weizenbaum, J. (1976). Computer Power and Human Reason: From Judgment to Calculation.

Woebot Health (2017). Woebot. https://woebothealth.com/ Accessed Oct. 14 2022.

Zuboff, S. (2019). The Age of Surveillance Capitalism: The Fight for a Human Future at the New Frontier of Power. Profile Books.

Siobhan O’Flynn is a deeply respected researcher, instructor, mentor, and colleague who has taught at the University of Toronto and University of Toronto Mississauga for over two decades. Her work in digital media and interactive storytelling began in 2001 with the Canadian Film Centre’s Interactive Art and Entertainment Program, later the CFC Media Lab.

She is widely acclaimed by students as a mentor who cares deeply for student experience and learning.